OpenAI has unveiled Shap-E, a new conditional generative model that produces high-quality 3D assets from text descriptions.

Unlike 3D generative models that produce a single output representation, Shap-E directly generates the parameters of implicit functions that can be rendered as both textured meshes and neural radiance fields (NeRFs). According to OpenAI, the model can generate 3D assets in a matter of seconds.
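To make the idea of an implicit function concrete, here is a minimal toy sketch in Python. It is not Shap-E's architecture or API: the `ToyImplicitParams` class, the sphere shape, and the `sharpness` value are all hypothetical, chosen only to show how one set of parameters can answer both a surface-style query (the kind a mesh is extracted from) and a NeRF-style density-and-color query.

```python
import math
from dataclasses import dataclass
from typing import Tuple

Vec3 = Tuple[float, float, float]


@dataclass
class ToyImplicitParams:
    """Hypothetical stand-in for the parameters a generative model would output."""
    center: Vec3
    radius: float
    color: Vec3


def signed_distance(params: ToyImplicitParams, point: Vec3) -> float:
    """Surface-style query: negative inside the shape, zero on it, positive outside."""
    return math.dist(point, params.center) - params.radius


def density_and_color(
    params: ToyImplicitParams, point: Vec3, sharpness: float = 20.0
) -> Tuple[float, Vec3]:
    """NeRF-style query: a volumetric density and an RGB color at a 3D point."""
    # Sigmoid of the negated signed distance: close to 1 inside, close to 0 outside.
    density = 1.0 / (1.0 + math.exp(sharpness * signed_distance(params, point)))
    return density, params.color


# The same parameters serve both kinds of query.
params = ToyImplicitParams(center=(0.0, 0.0, 0.0), radius=1.0, color=(0.9, 0.7, 0.2))
for point in [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (2.0, 0.0, 0.0)]:
    print(point, signed_distance(params, point), density_and_color(params, point)[0])
```

In this sketch the shape is defined by a function of position rather than by a stored list of triangles or voxels, so a generator only has to emit the function's parameters. In a real system those parameters would be neural network weights rather than a center and a radius.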

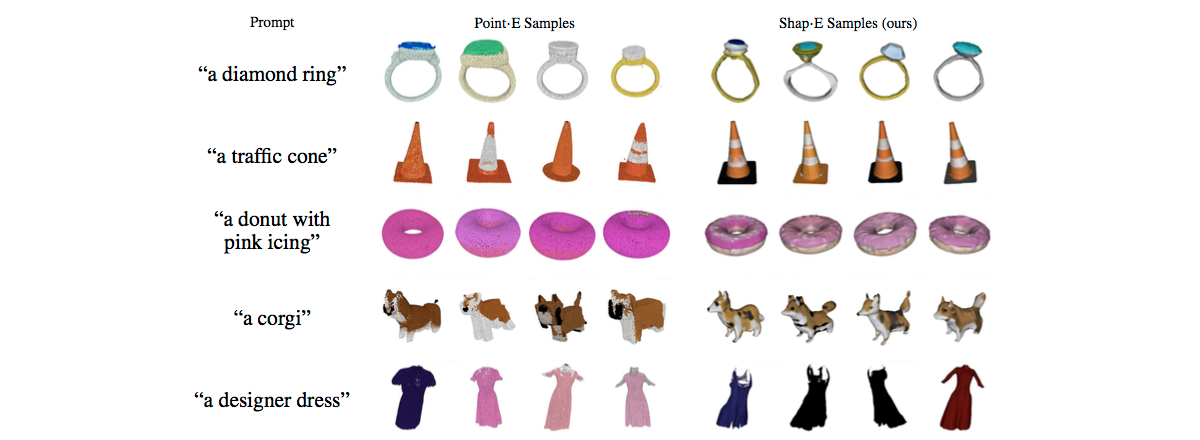

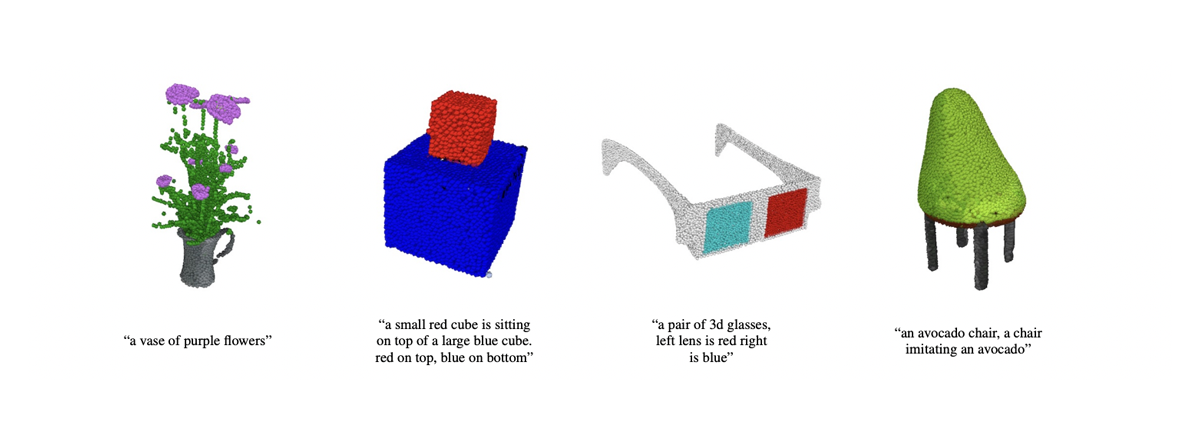

The team has shared several examples of Shap-E's output, including 3D models generated from text prompts such as a diamond ring, a corgi, and a designer dress.

Potential uses for Shap-E include producing video game assets and building models for scientific research or engineering simulations.

Shap-E is available on GitHub, and it's free.